Although computational modeling is not itself one of our research areas, we use it to simulate human behavior during complex cognitive tasks. These simulations allow us to test theories and performance strategies by comparing simulated data with observed human data, which complements traditional experimentation. In particular, we use the EPIC computational cognitive architecture (Kieras & Meyer, 1997).

Computational Modeling Using the EPIC Architecture

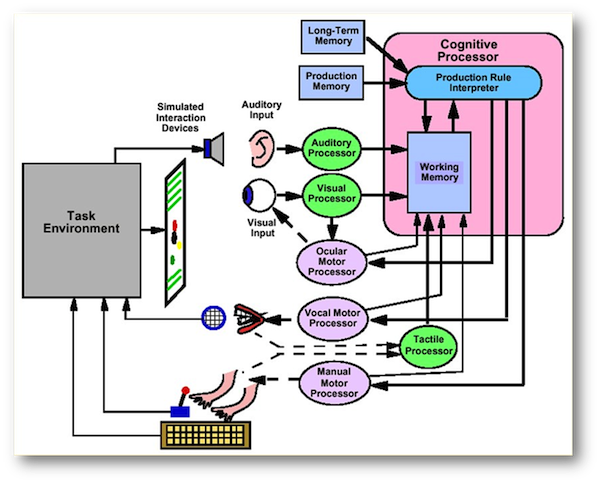

EPIC is designed to combine mechanisms for cognitive information processing and perceptual-motor activities with procedural task analyses of cognitive performance. EPIC has a central cognitive processor surrounded by peripheral perceptual and motor processors. Applying EPIC to model performance on a task requires specifying both the production-rule programming of the cognitive processor and the relevant operations of the perceptual and motor processors. The EPIC framework includes not only software modules for simulating a human performer, but also provisions for simulating the performer’s interaction with external equipment. For example, the left side of Figure 1 shows a simulated task environment, where virtual devices such as a display screen and keyboard provide the “physical” interface to the “performer” on the right. During simulations with EPIC, the task-environment software module assigns physical locations to the interface objects, and it generates simulated visual and auditory events in response to the simulated performer’s behavior. When the simulation is run, EPIC interacts dynamically with this task environment and produces an explicit sequence of the overt serial and parallel actions required to perform the task.

EPIC has separate perceptual processors with distinct temporal properties for several major sensory (e.g., visual, auditory, and tactile) modalities. These processors are simple “pipelines” through which information feeds forward asynchronously and in parallel. Each stimulus input to a perceptual processor may yield multiple symbolic outputs that are deposited in working memory as time passes. A common type of perceptual input is the visual stimulus. In EPIC, a visual stimulus in the external world is represented internally as a psychological “object-file” of visual properties that define the object. For example, an object-file can have a variety of “properties,” including color, size, and global or relative spatial location. The complete array of properties associated with an object-file depends on the exact nature and configuration of the external stimulus, and which of those features are salient or relevant to the observer. For example, visual objects which are text strings have a “label” property that serves to identify the verbal nature of the stimulus and may be used to access associated information in memory.

In EPIC, it is possible to detect the presence of a visual object and yet be unaware of many of its relevant properties. For example, the color of an external object may not be available in visual working memory until 100 ms after the stimulus has been detected. After this period, a “color” property is added to the object-file representing the stimulus, and it then becomes available to satisfy the conditions of any production rules that depend on stimulus color. This delay in access to the color property fits the time-course of neurochemical processes in the visual pathways that result in a conscious awareness of an object’s color. Processing durations for psychological properties are established from corresponding psychological and physiological data.
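The delayed availability of object-file properties described above can be illustrated with a small Python sketch. This is not EPIC code: the `ObjectFile` class, the `PROPERTY_DELAY_MS` table, and the zero delay for location are assumptions made for illustration, and only the 100 ms color delay is taken from the text.

```python
from dataclasses import dataclass

# Delay (ms) after detection before a property reaches visual working memory.
# Only the 100 ms color value comes from the text; the location value is a
# placeholder assumption.
PROPERTY_DELAY_MS = {"location": 0, "color": 100}


@dataclass
class ObjectFile:
    """Toy stand-in for a visual object-file (hypothetical, not EPIC's own type)."""
    detected_at_ms: int
    stimulus: dict  # true properties of the external object

    def available(self, now_ms: int) -> dict:
        """Return the properties that have been added to the object-file by now_ms."""
        elapsed = now_ms - self.detected_at_ms
        return {
            name: value
            for name, value in self.stimulus.items()
            if elapsed >= PROPERTY_DELAY_MS.get(name, 0)
        }


obj = ObjectFile(detected_at_ms=0, stimulus={"location": (3, 4), "color": "red"})
early = obj.available(50)   # object detected, color not yet known
late = obj.available(100)   # color property has now been added
```

A production rule whose condition mentions color could match `late` but not `early`, which is how the sketch mirrors detection preceding awareness of a property.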

EPIC also includes separate processors for several major motor (e.g., ocular, manual, and vocal) modalities, all of which operate simultaneously. To operate a motor processor, the cognitive processor sends it a command that contains the symbolic name for a desired type of movement and its relevant parameters (e.g., PUNCH LEFT INDEX). Then, the motor processor produces a simulated overt movement of its effectors, achieving the specified temporal and spatial characteristics for this movement. Feedback pathways from the motor processors and effectors to partitions of working memory help coordinate multiple-task cognitive performance.
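As a rough illustration of the command interface just described, the following Python sketch models a motor command as a symbolic movement name plus parameters. The `MotorCommand` and `MotorProcessor` names and the execution durations are hypothetical placeholders, not EPIC's actual classes or parameter values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MotorCommand:
    modality: str      # e.g. "manual", "ocular", or "vocal"
    movement: str      # symbolic movement type, e.g. "PUNCH"
    parameters: tuple  # e.g. ("LEFT", "INDEX")


class MotorProcessor:
    """Toy motor processor for one modality (hypothetical sketch)."""

    def __init__(self, modality: str, execution_ms: int):
        self.modality = modality
        self.execution_ms = execution_ms  # placeholder duration, not an EPIC value

    def execute(self, command: MotorCommand, now_ms: int) -> dict:
        """Return a simulated overt movement and the time at which it completes."""
        if command.modality != self.modality:
            raise ValueError(f"{self.modality} processor cannot run {command.modality} command")
        return {
            "movement": (command.movement, *command.parameters),
            "completed_at_ms": now_ms + self.execution_ms,
        }


# Separate processors can work on commands issued at the same moment.
manual = MotorProcessor("manual", execution_ms=150)
vocal = MotorProcessor("vocal", execution_ms=200)
punch = manual.execute(MotorCommand("manual", "PUNCH", ("LEFT", "INDEX")), now_ms=0)
say = vocal.execute(MotorCommand("vocal", "SAY", ("HIGH",)), now_ms=0)
```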

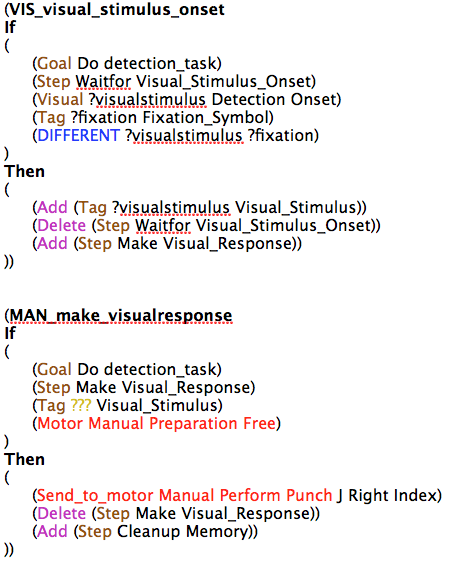

EPIC’s cognitive processor is programmed in terms of production rules, and it uses the Parsimonious Production System (PPS) interpreter [2]. PPS production rules have the format `(<rule-name> IF <condition> THEN <actions>)`. The rule condition refers only to the contents of the production-system working memory. The rule actions can add or delete items in working memory, and also send commands to the motor processors. The following production rules illustrate some of EPIC’s possible working memory items and production-rule actions:

The cognitive processor operates cyclically, consistent with known periodicities in the human information-processing system [3]. At the start of each cycle, the contents of working memory are updated with new outputs from the perceptual processors and the actions of applicable rules on the preceding cycle. At the end of each cycle, any motor commands are sent to the appropriate motor processors.

Similarly, inputs from the perceptual processors are accessed only intermittently, whenever the production-system working memory is updated at the start of each cycle. Thus, the cognitive-processor cycles are not synchronized with external stimulus and response events, but operate on a stochastic cycle with a mean of 50 ms [4].
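The timing consequences of this cyclic operation can be sketched in a few lines of Python. The text specifies only a stochastic cycle with a 50 ms mean; the uniform distribution, the `spread_ms` parameter, and both function names below are assumptions chosen for illustration.

```python
import random


def cycle_start_times(n_cycles, mean_ms=50.0, spread_ms=10.0, seed=0):
    """Start times of successive cognitive-processor cycles.

    Cycle durations are drawn uniformly from mean_ms +/- spread_ms, which is an
    assumed distribution; only the 50 ms mean comes from the text.
    """
    rng = random.Random(seed)
    t = 0.0
    starts = []
    for _ in range(n_cycles):
        starts.append(t)
        t += rng.uniform(mean_ms - spread_ms, mean_ms + spread_ms)
    return starts


def first_cycle_seeing(event_ms, starts):
    """Index of the first cycle that starts at or after a perceptual output arrives."""
    for index, start in enumerate(starts):
        if start >= event_ms:
            return index
    return None


starts = cycle_start_times(10)
# A perceptual output arriving 1 ms into the first cycle is not picked up until
# working memory is updated at the start of the second cycle.
seen_in = first_cycle_seeing(1.0, starts)
```

Because an input waits for the next cycle boundary, the same stimulus can incur a different delay on each trial, which is one way a stochastic cycle contributes variability to simulated reaction times.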

Most traditional production-system architectures allow only one production rule to be fired at a time, and its actions are then executed [5]. Thus, when more than one rule has conditions that match the current contents of working memory, some kind of conflict-resolution mechanism must choose which rule to fire. For example, in the SOAR architecture [4], production rules only propose candidate operators and a separate process must then decide which particular candidate to apply.

In contrast, the Parsimonious Production System of EPIC’s cognitive processor has a very simple policy: On each processing cycle, PPS fires all rules whose conditions match the current contents of working memory, and PPS executes all of their actions. Thus, EPIC models have true parallel cognitive processing at the production-rule level, and multiple “threads” or processes can be represented with sets of rules such that they all run concurrently. The assumptions and theory embodied in EPIC have been strongly supported by numerous empirical [6,7,8] and applied [9,10] models. These models demonstrate EPIC’s ability to predict and explain reaction time data from basic and complex multiple-task paradigms. A more complete description of EPIC’s perceptual, motor, and cognitive processors, as well as the theory and motivation for the architecture, can be found in Meyer and Kieras (1997) [1].
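The fire-all-matching-rules policy can be illustrated with a minimal Python sketch. It is not the PPS interpreter: rules here are plain dictionaries, conditions are sets of literal working-memory items (no pattern variables or negation), and the rule names and item strings are invented for the example.

```python
def run_cycle(rules, working_memory):
    """Fire every rule whose condition matches; return (new_memory, motor_commands).

    All rules are matched against the same snapshot of working memory, so no
    conflict resolution is needed and every matching rule's actions are applied.
    """
    fired = [rule for rule in rules if rule["if"] <= working_memory]
    adds, deletes, commands = set(), set(), []
    for rule in fired:
        adds |= rule.get("add", set())
        deletes |= rule.get("delete", set())
        commands += rule.get("send", [])
    return (working_memory - deletes) | adds, commands


# Two hypothetical task "threads" plus a rule that does not match.
rules = [
    {"name": "respond-to-tone", "if": {"tone detected"},
     "delete": {"tone detected"}, "add": {"tone response sent"},
     "send": [("vocal", "SAY", "HIGH")]},
    {"name": "respond-to-shape", "if": {"shape detected"},
     "delete": {"shape detected"}, "add": {"shape response sent"},
     "send": [("manual", "PUNCH", "LEFT", "INDEX")]},
    {"name": "respond-to-light", "if": {"light detected"},
     "send": [("manual", "PUNCH", "RIGHT", "INDEX")]},
]

memory = {"tone detected", "shape detected"}
new_memory, commands = run_cycle(rules, memory)
```

Both matching rules fire in the same cycle, so the vocal and manual commands are issued together; a one-rule-at-a-time architecture would instead have to choose between them.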

References

1. Meyer, D. E., & Kieras, D. E. (1997). A computational theory of executive cognitive processes and multiple-task performance: I. Basic mechanisms. Psychological Review, 104(1), 3-65.

2. Bovair, S., Kieras, D. E., & Polson, P. G. (1990). The acquisition and performance of text-editing skill: A cognitive complexity analysis. Human-Computer Interaction, 5(1), 1-48.

3. Kristofferson, A. B. (1967). Attention and psychophysical time. Acta Psychologica, 27, 93-100.

4. Newell, A. (1990). Unified theories of cognition. Cambridge, MA: Harvard University Press.

5. Anderson, J. R., & Matessa, M. (1997). A production system theory of serial memory. Psychological Review, 104(4), 728-748.

6. Schumacher, E. H., Seymour, T. L., Glass, J. M., Fencsik, D. E., Lauber, E. J., Kieras, D. E., et al. (2001). Virtually perfect time sharing in dual-task performance: Uncorking the central cognitive bottleneck. Psychological Science, 12(2), 101-108.

7. Kieras, D. E., Meyer, D. E., Mueller, S., & Seymour, T. (1999). Insights into working memory from the perspective of the EPIC architecture for modeling skilled perceptual-motor and cognitive human performance. In Models of working memory: Mechanisms of active maintenance and executive control (pp. 183-223). New York, NY: Cambridge University Press.

8. Meyer, D. E., Glass, J. M., Mueller, S. T., Seymour, T. L., & Kieras, D. E. (2001). Executive-process interactive control: A unified computational theory for answering 20 questions (and more) about cognitive ageing. European Journal of Cognitive Psychology, 13(1-2), 123-164.

9. Kieras, D. E., & Meyer, D. E. (1997). An overview of the EPIC architecture for cognition and performance with application to human-computer interaction. Human-Computer Interaction, 12(4), 391-438.

10. Meyer, D. E., & Kieras, D. E. (1999). Precis to a practical unified theory of cognition and action: Some lessons from EPIC computational models of human multiple-task performance. In Attention and performance XVII: Cognitive regulation of performance: Interaction of theory and application (pp. 17-88). Cambridge, MA: The MIT Press.