In addition to its theoretical and applied implications mentioned in other sections, the response-time-based recognition paradigm we developed is also important because it reveals constraints on human memory. We have been developing a computational model of performance that distinguishes the aspects of recognition memory that are under one’s conscious control from those that are not. Within this model, we are able to bridge previous work on response competition, error monitoring, recognition memory, and motor control. In particular, we suggest that fast familiarity processes and slow recollective processes in memory interact with response-conflict processes and motor system constraints during speeded recognition tasks.

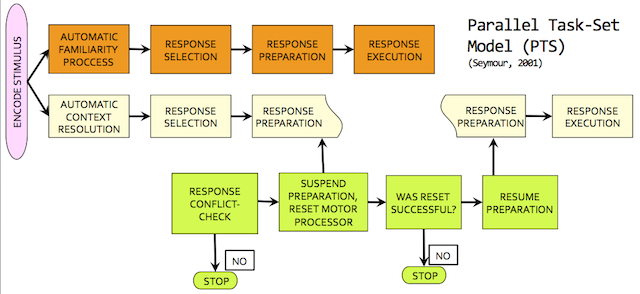

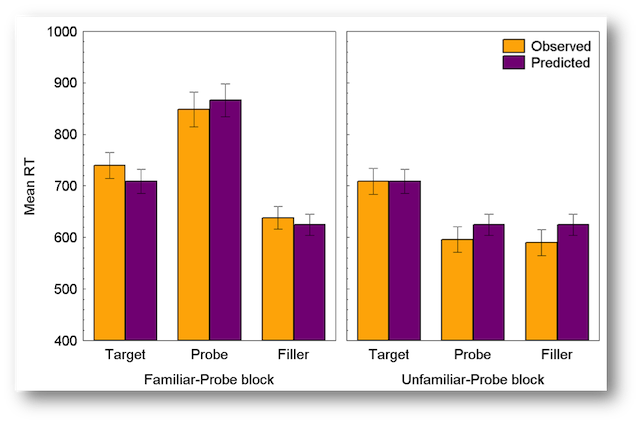

On critical probe trials in the response-time-based guilty knowledge test (RT-GKT), a guilty suspect experiences competition between familiarity processes that indicate a “Yes” response (essentially a confession) and more recollective processes that signal a “No” response (to conceal crime knowledge). Sending two disparate responses to the motor system would confuse motor planning. In our Parallel Task-Set (PTS) model, we posit general control processes that prevent this confusion by facilitating one response and inhibiting the other. The result is either a fast error or a slow correct response, which matches the empirical data for responses to crime stimuli in the RT-GKT. The benefits of this model are twofold. First, it allows researchers interested in the RT-GKT to understand which strategies can influence detection accuracy. Second, it offers an integrated theory of response competition and error monitoring that is applicable to other basic cognitive tasks. This work argues that many current models of recognition memory are insufficient because they consider memory processes in isolation from the control and motor processes with which (we claim) they interact. The PTS model has been implemented as a computational cognitive model and simulation that produces data very similar to human data (Seymour, 2001).
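The fast-error versus slow-correct pattern described above can be illustrated with a toy race simulation. This is only a minimal sketch under stated assumptions and is not the PTS implementation reported in Seymour (2001): the function names, the Gaussian finishing times, and every parameter value (in milliseconds) are hypothetical choices made for illustration. A fast familiarity signal primes “Yes”; if the “Yes” response is committed before the slower recollection signal arrives, the trial ends in an error, and otherwise recollection inhibits “Yes” and a “No” response follows at an extra time cost.

```python
import random
from statistics import mean


def simulate_probe_trial(rng, familiarity_mean=350.0, recollection_mean=550.0,
                         sd=60.0, motor_delay=250.0, inhibition_cost=100.0):
    """Simulate one critical-probe trial and return (response, rt_ms).

    All parameter values are hypothetical, chosen only for illustration.
    """
    # Finishing times of the two memory processes (clamped at zero).
    t_familiarity = max(0.0, rng.gauss(familiarity_mean, sd))
    t_recollection = max(0.0, rng.gauss(recollection_mean, sd))

    # Time at which the familiarity-driven "Yes" response would be executed.
    yes_commit_time = t_familiarity + motor_delay

    if yes_commit_time < t_recollection:
        # Recollection arrived too late to intervene: fast error ("Yes").
        return "Yes", yes_commit_time

    # Recollection arrived in time: inhibit "Yes", then prepare and execute "No".
    return "No", t_recollection + inhibition_cost + motor_delay


def simulate(n_trials=5000, seed=0):
    """Return (error_rts, correct_rts) over n_trials simulated probe trials."""
    rng = random.Random(seed)
    trials = [simulate_probe_trial(rng) for _ in range(n_trials)]
    error_rts = [rt for response, rt in trials if response == "Yes"]
    correct_rts = [rt for response, rt in trials if response == "No"]
    return error_rts, correct_rts


def summarize(error_rts, correct_rts):
    """Summarize error rate and mean RTs for the two outcome types."""
    total = len(error_rts) + len(correct_rts)
    return {
        "error_rate": len(error_rts) / total,
        "mean_error_rt": mean(error_rts),
        "mean_correct_rt": mean(correct_rts),
    }


if __name__ == "__main__":
    print(summarize(*simulate()))
```

Under these assumed parameters, the simulated errors are on average faster than the simulated correct responses, because an error can only occur when the “Yes” response is committed before recollection finishes, while every correct response pays the additional inhibition cost.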

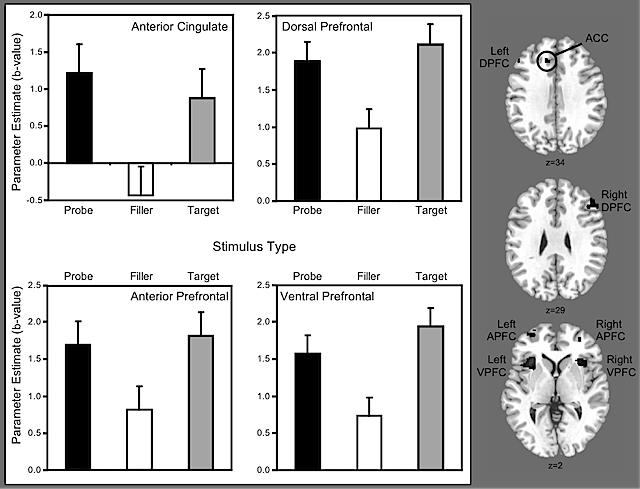

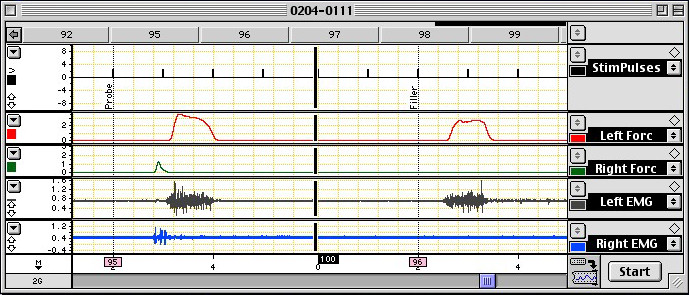

Through a collaboration with a colleague, Eric Schumacher, we have been able to provide compelling evidence for the PTS model’s predictions. We have collected data from matching functional magnetic resonance imaging [fMRI; collected at Georgia Tech] (Seymour & Schumacher, 2008) and electromyography [EMG; motor neuron recordings collected at UCSC] (Schumacher, Seymour, & Schwarb, 2010) experiments. These data clearly show that critical probe stimuli lead not only to the activation of brain areas associated with response conflict, but also to a discrete motor activation of the arm associated with the “Yes” response, which is then inhibited before the other arm produces a “No” response.

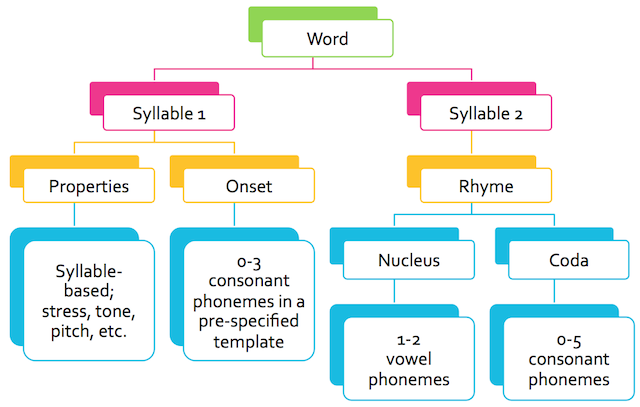

Alan Baddeley’s early presentation of his working memory model prompted a great deal of research by others that continues today. Although the model has held up surprisingly well, controversy surrounds the effects of phonological complexity (the number of phonemes and syllables in a word) and articulatory duration (the time it takes to say a word) in the serial recall task. By thinking of words as motor programs represented in memory as hierarchical linguistic structures (hierarchy shown above), and mapping the individual phonemes onto the state of various vocal articulators, we were able to take a significant step towards resolving this controversy. Although the field was dominated by contradictory and confusing findings, we suspected that the confusion was in part due to inadequate and inconsistent measurement of the phonological similarity and complexity of words. A key part of this work was the development of a detailed and phonologically accurate tool for measuring the phonological similarity of spoken words, called Psimetrica. This tool, created by Shane Mueller, was then used in multiple experiments to unravel the controversy surrounding serial memory span. The results not only support Baddeley’s original formulation, but also disarm previous criticisms that were based on inadequate phonological measurement of word complexity (Mueller, Seymour, Kieras, & Meyer, 2003).
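To make the idea of feature-based phonological similarity concrete, the following is a minimal sketch and is not Psimetrica or its algorithm. It assumes a tiny, hypothetical feature table (voicing, place, manner) for a handful of phonemes, scores a phoneme substitution by the fraction of features that differ, and aligns two words with a standard edit-distance recurrence. The names `FEATURES`, `phoneme_cost`, and `word_distance`, and the insertion/deletion cost, are illustrative assumptions.

```python
# Hypothetical, highly simplified articulatory features: (voicing, place, manner).
FEATURES = {
    "p": ("voiceless", "bilabial", "stop"),
    "b": ("voiced", "bilabial", "stop"),
    "t": ("voiceless", "alveolar", "stop"),
    "d": ("voiced", "alveolar", "stop"),
    "k": ("voiceless", "velar", "stop"),
    "m": ("voiced", "bilabial", "nasal"),
    "ae": ("voiced", "front", "low vowel"),
    "i": ("voiced", "front", "high vowel"),
}


def phoneme_cost(a, b):
    """Substitution cost: fraction of features on which two phonemes differ."""
    features_a, features_b = FEATURES[a], FEATURES[b]
    differing = sum(x != y for x, y in zip(features_a, features_b))
    return differing / len(features_a)


def word_distance(word1, word2, indel_cost=1.0):
    """Feature-weighted edit distance between two phoneme lists.

    The result is normalized by the longer word's length, so 0.0 means
    identical phoneme sequences.
    """
    n, m = len(word1), len(word2)
    # table[i][j] = cost of aligning the first i phonemes of word1
    # with the first j phonemes of word2.
    table = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        table[i][0] = i * indel_cost
    for j in range(1, m + 1):
        table[0][j] = j * indel_cost
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            table[i][j] = min(
                table[i - 1][j] + indel_cost,  # delete a phoneme from word1
                table[i][j - 1] + indel_cost,  # insert a phoneme from word2
                table[i - 1][j - 1] + phoneme_cost(word1[i - 1], word2[j - 1]),
            )
    return table[n][m] / max(n, m, 1)


if __name__ == "__main__":
    bat, pat, cat = ["b", "ae", "t"], ["p", "ae", "t"], ["k", "ae", "t"]
    print(word_distance(bat, pat), word_distance(bat, cat))
```

In this toy table, “bat” and “pat” differ only in the voicing of the first consonant, whereas “bat” and “cat” differ in both voicing and place, so the first pair receives the smaller distance. A measure of this general kind lets the similarity of word lists be quantified consistently across experiments rather than judged informally.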